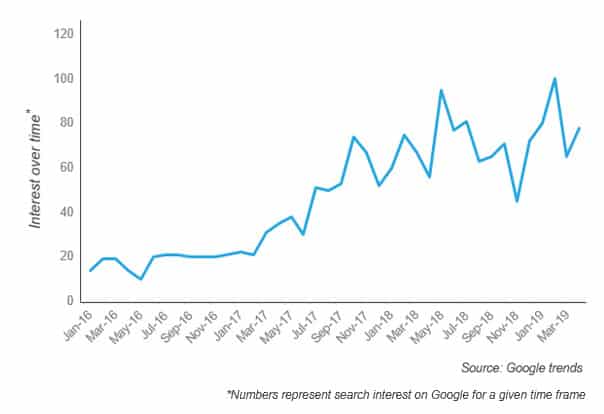

Interest in edge computing – which moves data storage, computing, and networking closer to the point where data is generated or consumed – has grown significantly over the past several years (as highlighted in the Google Trends search interest chart below). That growth reflects its ability to reduce latency, lower the cost of data transmission, enhance data security, and reduce pressure on bandwidth.

But, as discussions around edge computing have increased, so have misconceptions around the potential applications and benefits of this computing architecture. Here are a few myths that we’ve encountered during discussions with enterprises.

Myth 1: Edge computing is just an idea on the drawing board

Although some believe that edge computing is still in the experimental stages with no practical applications, many supply-side players have already made significant investments in bringing new solutions and offerings to the market. For example, Vapor IO is building a network of decentralized data centers to power edge computing use cases. Saguna is building capabilities in multi-access edge computing. Swim.ai allows developers to create real-time streaming applications that process data from connected devices locally. Leading cloud computing players, including Amazon, Google, and Microsoft, all offer their own edge computing platforms. Dropbox formed its edge network to give its customers faster access to their files. And Facebook, Netflix, and Twitter use edge computing for content delivery.

With all these examples, it’s clear that edge computing has advanced well beyond the drawing board.

Myth 2: Edge computing supports only IoT use cases

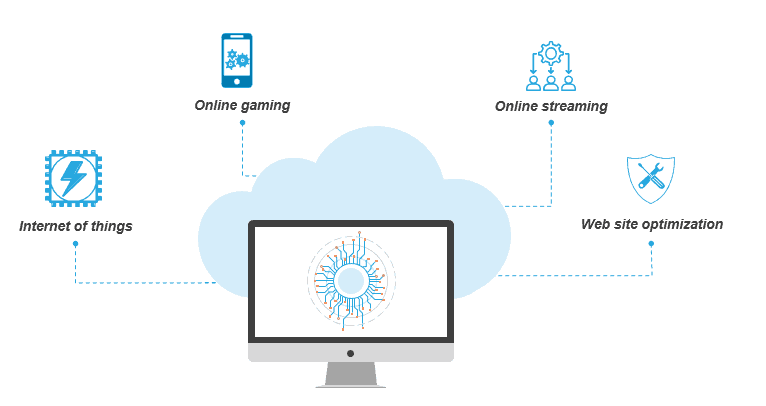

Processing data on a connected device, such as a surveillance camera, to enable real-time decision making is one of the most common use cases of edge computing. This Internet of Things (IoT) context is what brought edge computing to the center stage, and understandably so. Indeed, our report on IoT Platforms highlights how edge analytics capabilities serve as a key differentiator for leading IoT platform vendors.

However, as detailed in our recently published Edge Computing white paper, the value and role of edge computing extend far beyond IoT.

For example, in online streaming, it helps deliver HD content and live streams with minimal latency. Its real-time data transfer ability counters what’s often called “virtual reality sickness” in online AR/VR-based gaming. And its use of local infrastructure can help organizations optimize their websites. Faster payment processing, for instance, can directly increase an e-commerce company’s revenue.

Myth 3: Real-time decision making is the only driver for edge computing

There’s no question that one of edge computing’s key value propositions is its ability to enable real-time decisions. But there are many more use cases in which it adds value beyond reduced latency.

For example, its ability to enhance data security helps manufacturing firms protect sensitive and sometimes highly confidential information. Video surveillance, where cameras constantly capture images for analysis, can generate hundreds of petabytes of data every day. Because edge computing processes this footage close to the cameras rather than sending all of it to a central data center, it eases bandwidth pressure and significantly reduces transmission costs. And when connected devices operate in environments with intermittent to no connectivity, it can process data locally.
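To make the bandwidth and connectivity points concrete, here is a minimal Python sketch of an edge device that filters camera frames locally and queues the results until a network link is available. The `EdgeNode` class, the `motion_threshold` value, and the motion scores are all hypothetical and chosen only for illustration; they do not describe any particular vendor's product.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class EdgeNode:
    """Hypothetical edge device: filters frames locally, queues results while offline."""
    motion_threshold: float = 0.5  # assumed cut-off for "interesting" frames
    pending: deque = field(default_factory=deque)
    uploaded: list = field(default_factory=list)

    def process_frame(self, frame_id: int, motion_score: float) -> None:
        # Only frames above the threshold are kept; the rest never leave the device.
        if motion_score >= self.motion_threshold:
            self.pending.append({"frame_id": frame_id, "motion": motion_score})

    def sync(self, connected: bool) -> int:
        # Flush the local queue only when a link is available.
        if not connected:
            return 0
        sent = len(self.pending)
        while self.pending:
            self.uploaded.append(self.pending.popleft())
        return sent


node = EdgeNode()
scores = [0.1, 0.9, 0.2, 0.7, 0.05, 0.3]  # made-up motion scores for six frames
for frame_id, score in enumerate(scores):
    node.process_frame(frame_id, score)

offline_sent = node.sync(connected=False)  # no link: results stay on the device
online_sent = node.sync(connected=True)    # link restored: queued results go out
```

In this toy run, only the frames that pass the local filter are ever transmitted, which is the mechanism behind the bandwidth and cost savings described above.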

Myth 4: Edge spells doom for cloud computing

Much of the talk around edge computing suggests that the current cloud computing architecture is not suited to power new-age use cases and technologies. This has led to attention-grabbing headlines about edge spelling the doom of cloud computing, with developers moving all their applications to the edge.

However, edge and cloud computing share a symbiotic relationship. Edge is best suited to run workloads that are less data-intensive and require real-time analysis, such as streaming analytics and the inference phase of machine learning (ML) algorithms. Cloud, on the other hand, powers edge computing by running data-intensive workloads such as training ML algorithms and maintaining databases related to end-user accounts. For example, in the case of autonomous cars, edge enables real-time decision making related to obstacle recognition, while cloud stores long-term data to train the car software to identify and classify obstacles. Clearly, edge and cloud computing cannot be viewed in isolation from each other.
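The division of labor between cloud and edge can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the "model" is just a distance threshold, and the function names `train_in_cloud` and `infer_at_edge`, along with the sample data, are hypothetical stand-ins rather than a real autonomous-driving pipeline.

```python
from statistics import mean


def train_in_cloud(samples):
    """Cloud side: learn a distance threshold from labelled history.

    A toy stand-in for data-intensive ML training.
    """
    obstacle = [dist for dist, is_obstacle in samples if is_obstacle]
    clear = [dist for dist, is_obstacle in samples if not is_obstacle]
    # Place the decision boundary midway between the two class averages.
    return {"threshold_m": (mean(obstacle) + mean(clear)) / 2}


def infer_at_edge(model, distance_m):
    """Edge side: apply the shipped model locally, with no network round trip."""
    return distance_m < model["threshold_m"]


# Made-up (distance in meters, was_obstacle) history stored in the cloud.
history = [(2.0, True), (4.0, True), (20.0, False), (30.0, False)]
model = train_in_cloud(history)       # runs in the cloud, infrequently
near = infer_at_edge(model, 5.0)      # runs on the device, per reading
far = infer_at_edge(model, 18.0)
```

The training step needs the full history and can run wherever storage and compute are plentiful, while the inference step needs only the small trained model, which is why it fits on the device.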

To learn more about edge computing and to discover our decision-making framework for adopting edge computing, please read our Edge Computing white paper.