I recently came across a news article that said doctors will NOT be held responsible for a wrong decision or recommendation that was based on the output of an artificial intelligence (AI) system. That’s shocking and disturbing on so many levels! Think of the multitude of AI-based decisions possible in banking and financial services, the public sector, and many other industries, and the worrying implications that wrong decisions could have for people’s lives and for society.

One of the never-ending debates around AI adoption concerns the ethics and explainability of these systems’ black-box decision making. There are multiple dimensions to this issue:

- Definitional ambiguity – Trustworthy, fair and ethical, and repeatable – these are the different characteristics expected of AI systems in the context of explainability. Most enterprises cite explainability as a concern, but most don’t really know what it means or the degree to which it is required.

- Misplaced ownership – While AI systems can be trained, re-trained, tested, and course corrected, no developer can guarantee bias-free or accurate decision making. So, in case of a conflict, who should be held responsible? The enterprise, the technology providers, the solution developers, or another group?

- Rising expectations – AI systems are being increasingly trusted with highly complex, multi-stakeholder decision-making scenarios which are contextual, subjective, open to interpretation, and require emotional intelligence.

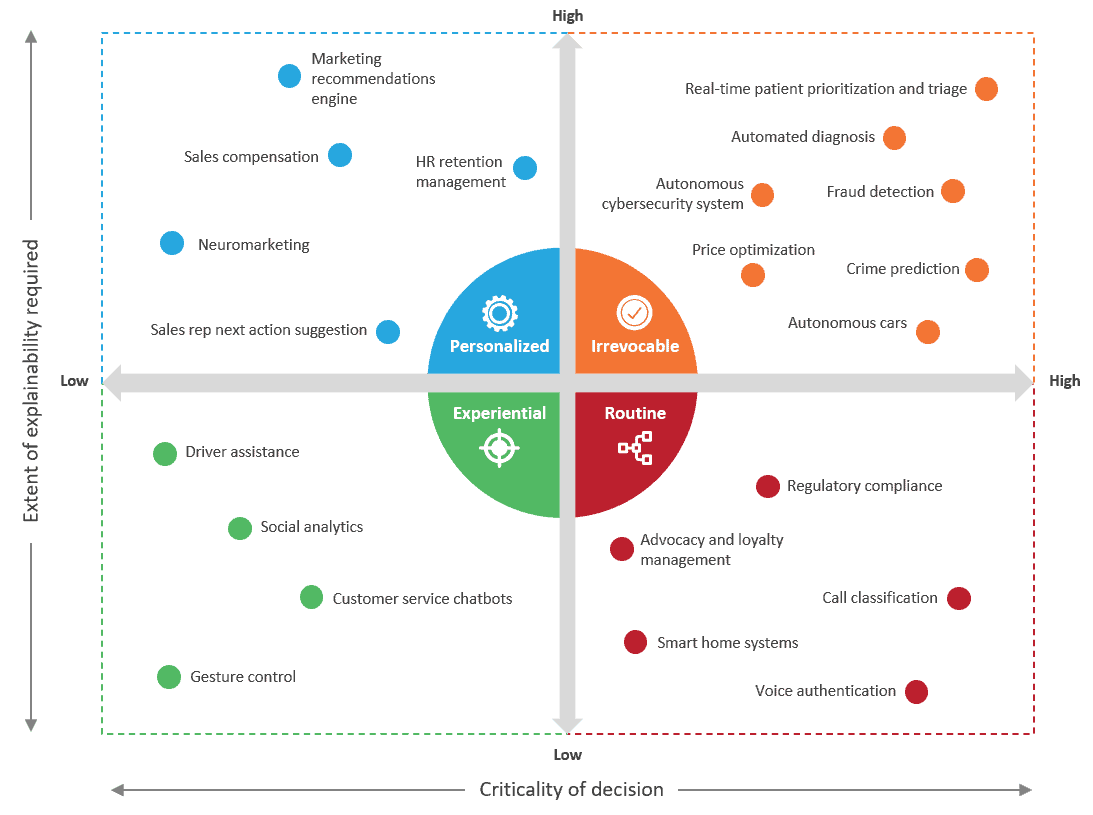

Enterprises, particularly highly regulated ones, have hit a roadblock in their AI adoption journeys and scalability plans, given the consequences of wrong AI decisions. In fact, one in every three AI use cases fails to reach a substantial, scalable level due to explainability concerns.

While the issue may not be a concern for all AI-based use cases, it is usually a roadblock for scenarios with high complexity and high criticality, which lead to irrevocable decisions.

As Hanna Wallach, a senior principal researcher at Microsoft Research in New York City, stated, “We cannot treat these systems as infallible and impartial black boxes. We need to understand what is going on inside of them and how they are being used.”

Progress so far

Last year, Singapore released its Model AI Governance Framework, which provides readily implementable guidance to private sector organizations seeking to deploy AI responsibly. More recently, Google released an end-to-end framework for an internal audit of AI systems. There are many other similar efforts by opponents and proponents of AI alike; however, a feasible solution is still out of sight.

Technology majors and service providers have also made meaningful investments to address the issue, including Accenture (AI fairness Toolkit), HCL (Enterprise XAI Framework), PwC (Responsible AI), and Wipro (ETHICA). There are also many niche firms centered on explainable AI (XAI) that focus only on addressing the explainability conundrum, particularly for highly regulated industries like healthcare and the public sector. Ayasdi, Darwin AI, KenSci, and Kyndi deserve a mention.

The solution focus varies from enabling enterprises to compare the fairness and performance of multiple models to enabling users to set their own ethicality bars. It’s interesting to note that all of these are bolt-on solutions that explain a decision in a human-interpretable format; none is an AI product with explainability embedded in it.

The missing link

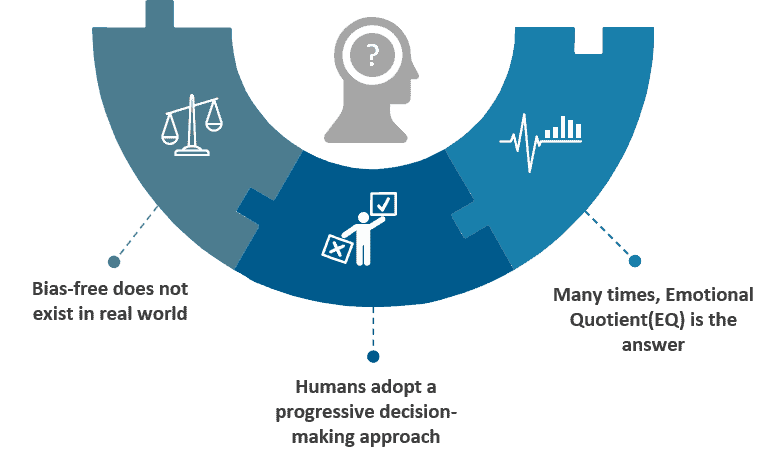

Considering this is an artificial form of intelligence, let’s take a step back and analyze how humans make such complex decisions:

- Bias-free does not exist in the real world: The first thing to appreciate is that humans are not free from biases, and biases by their nature are subjective and open to interpretation.

- Progressive decision-making approach: A key difference between humans and the machines making such decisions is the fact that even with all processes in place, humans seek help, pursue guidance in case of confusion, and discuss edge cases that are more prone to wrong decision making. Complex decision making is seldom left to one individual alone; rather, it’s a hierarchy of decision makers in play, adding knowledge on top of previous insights to build a decision tree.

- Emotional Quotient (EQ): Humans have emotions, and even though most decisions require pragmatism, it’s the EQ in human decisions that explains the outcomes in many situations.

These are behaviors that today’s AI systems are not trained to adopt. A disproportionate focus on speed and cost has led to neglect of the human element that ensures accuracy and acceptance. And instead of addressing accuracy as a characteristic, we add another layer of complexity to AI systems with explainability.

And even if the AI system is able to explain how and why it made a wrong decision, what good does that do anyway? Who is willing to put money in an AI system that makes wrong decisions but explains them really well? What we need is an AI system that makes the right decisions, so it does not need to explain them.

AI systems of the future need to be designed with these humane elements embedded in their nature and functionality. This may include pointing out edge cases, “discussing” and “debating” complex cases with other experts (humans or other AI systems), embedding the element of EQ in decision making, and at times even handing a decision back to humans when the system encounters a new scenario where the probability of a wrong decision is higher.
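To make the idea of handing a decision back to humans concrete, here is a minimal sketch. It assumes the system exposes a probability for each possible outcome; the names `Decision` and `decide_or_defer`, the `"needs_human_review"` label, and the 0.9 threshold are all hypothetical choices for illustration, not part of any product mentioned here.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Decision:
    label: str         # chosen outcome, or "needs_human_review"
    confidence: float  # probability the model gave its top outcome
    deferred: bool     # True when the case is handed back to a person


def decide_or_defer(probabilities: Dict[str, float],
                    threshold: float = 0.9) -> Decision:
    """Return the top outcome, or defer when the model is not confident enough.

    The threshold is an assumption; in practice it would be set according
    to how critical and irrevocable the decision is.
    """
    if not probabilities:
        raise ValueError("probabilities must not be empty")
    top_label, top_prob = max(probabilities.items(), key=lambda kv: kv[1])
    if top_prob < threshold:
        # Edge case: flag it and hand it to a human instead of guessing.
        return Decision("needs_human_review", top_prob, True)
    return Decision(top_label, top_prob, False)


clear_case = decide_or_defer({"approve": 0.97, "reject": 0.03})
edge_case = decide_or_defer({"approve": 0.55, "reject": 0.45})
print(clear_case)
print(edge_case)
```

A raw probability is only a rough proxy for “likely to be wrong,” so a real system would probably also need calibration and a check for inputs unlike anything seen in training.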

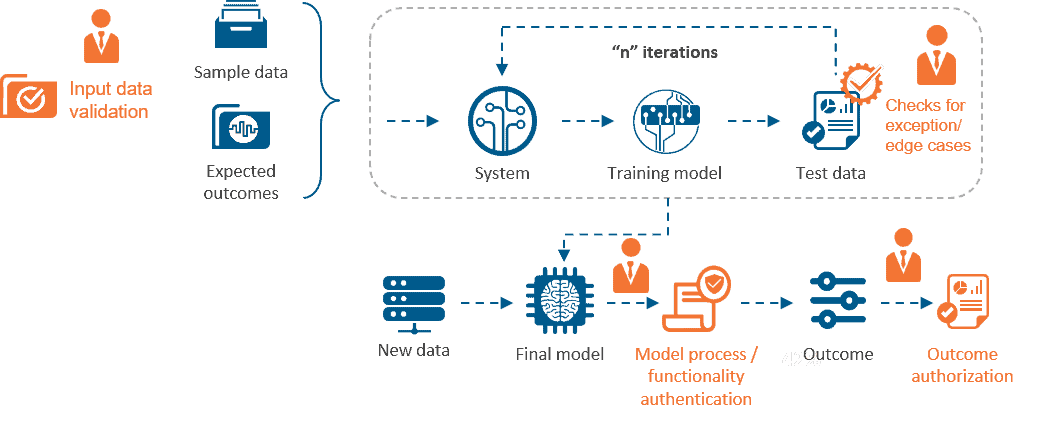

But until we get there, a practical way for organizations to address these explainability challenges is to adopt a hybrid human-in-the-loop approach. Such an approach relies on subject matter experts (SMEs), such as ethicists, data scientists, regulators, and domain experts, to:

- Improve learning models’ outcomes over time

- Check for biases and discrepancies

- Ensure compliance

In this approach, instead of relying on a large training data set to build the model, the machine learning system is built iteratively with regular inputs from experts.
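One way such an iterative loop could look is sketched below. It uses uncertainty sampling, a technique from active learning chosen here purely for illustration; the toy one-feature “model,” the function names, and the `ask_expert` callback (standing in for a real SME review step) are all assumptions.

```python
from statistics import mean
from typing import Callable, List, Tuple

Example = Tuple[float, int]  # (feature value, label 0 or 1)


def fit_threshold(labeled: List[Example]) -> float:
    """Toy 'model': midpoint between the mean feature value of each class.

    Assumes the labeled set contains at least one example of each class.
    """
    zeros = [x for x, y in labeled if y == 0]
    ones = [x for x, y in labeled if y == 1]
    return (mean(zeros) + mean(ones)) / 2


def human_in_the_loop(seed: List[Example],
                      pool: List[float],
                      ask_expert: Callable[[float], int],
                      rounds: int = 3,
                      batch: int = 2) -> Tuple[float, List[Example]]:
    """Refit the model each round on a growing set of expert-labeled cases."""
    labeled = list(seed)
    remaining = list(pool)
    for _ in range(rounds):
        if not remaining:
            break
        threshold = fit_threshold(labeled)
        # Cases nearest the decision boundary are the ones the model is
        # least sure about, so those are sent to the expert first.
        remaining.sort(key=lambda x: abs(x - threshold))
        queried, remaining = remaining[:batch], remaining[batch:]
        labeled.extend((x, ask_expert(x)) for x in queried)
    return fit_threshold(labeled), labeled


# Hypothetical expert rule standing in for a human reviewer.
def expert(x: float) -> int:
    return int(x >= 4.5)


final_threshold, labeled_examples = human_in_the_loop(
    seed=[(0.0, 0), (10.0, 1)],
    pool=[1.0, 4.0, 5.0, 6.0, 9.0],
    ask_expert=expert,
)
print(final_threshold, len(labeled_examples))
```

The point of the sketch is the shape of the loop: the model starts from a small seed, experts are asked only about the cases where their input matters most, and the same review step is a natural place to check for bias and compliance.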

In the long run, enterprises need to build a comprehensive governance structure for AI adoption and data leverage. Such a structure will have to institute explainability norms that factor in the criticality of machine decisions, the required expertise, and checks throughout the lifecycle of any AI implementation. Humane intelligence, not merely artificial intelligence, is what the world of the future requires.

We would be happy to hear your thoughts on approaches to AI and XAI. Please reach out to [email protected] for a discussion.