
Fighting Health Misinformation on Social Media – How Can Enterprises and Service Providers Help Restore Trust?

A proposed bill in the US – the Health Misinformation Act – seeks to make social media companies responsible for the spread of false information about vaccines and other health-related claims during the pandemic. It also raises questions about the liability social media platforms bear for content posted by their users. Although platforms are currently shielded from liability for user-posted content under Section 230 of the Communications Decency Act (CDA)*, the bill could withdraw this shield under certain specified circumstances**. How can enterprises move forward in this changing regulatory environment, and what role can service providers play in helping platforms adapt to the new realities and restore trust? To find out, read on.

What is the impact of health misinformation?

Health-related misinformation can be fatal. According to the World Health Organization (WHO), in the first three months of 2020, nearly 6,000 people globally had to be hospitalized, and 800 people lost their lives due to coronavirus misinformation.

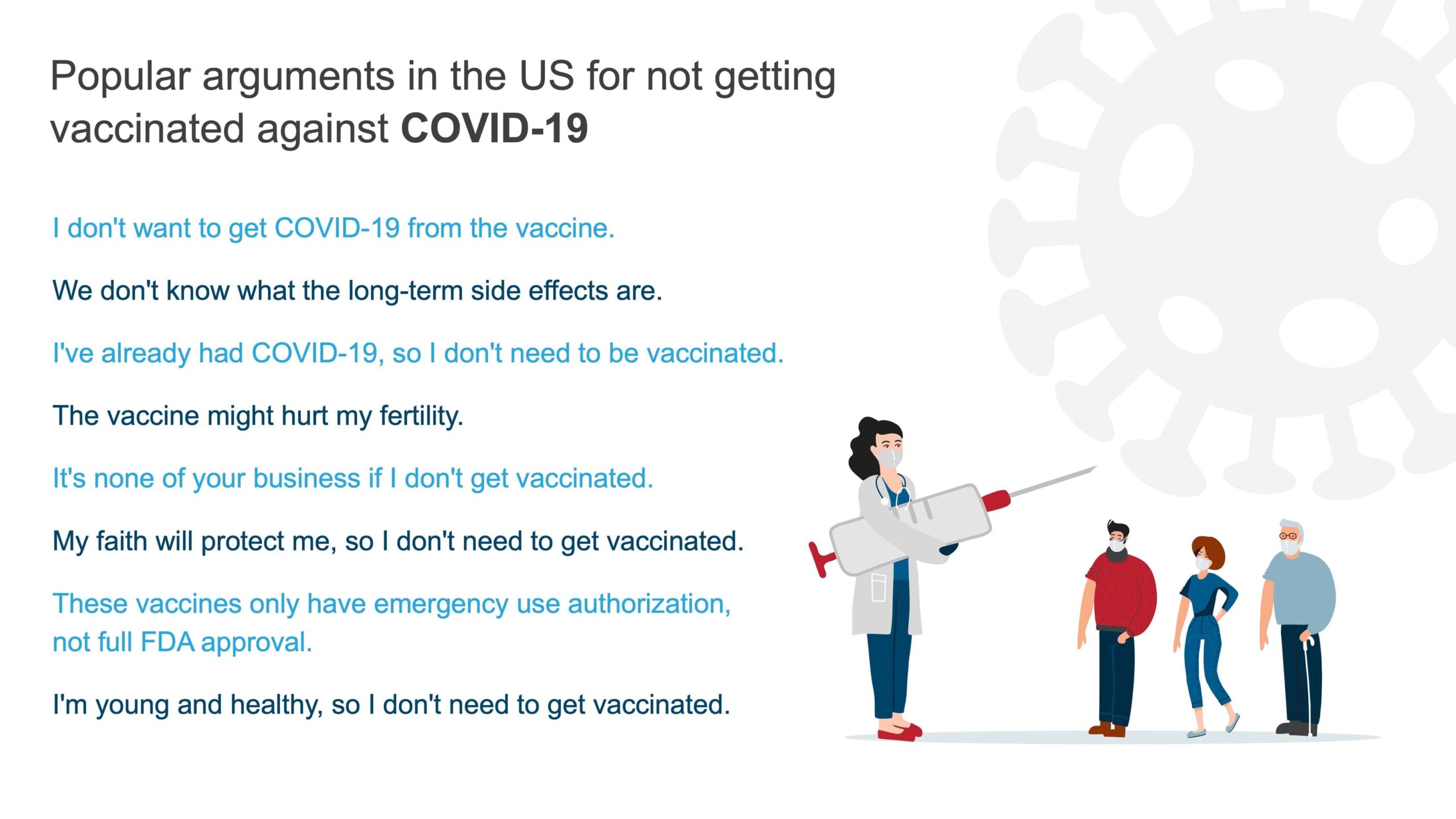

In today’s digital age, such an infodemic – an overabundance of often unreliable information that spreads rapidly alongside a disease outbreak – can instill distrust and lead people to dismiss proven public health measures, including vaccines. Case in point: data shows that as many as 99% of new coronavirus deaths in the US are among unvaccinated people.

Current health misinformation prevalence in social media

Social media platforms continue to grapple with health misinformation. Here are some recent examples:

- YouTube removed more than 800,000 videos containing coronavirus misinformation from its platform between March 2020 and March 2021. However, as of July 22, six of the 12 anti-vaccine activists responsible for more than half of the anti-vaccine content online were still searchable and still posting videos

- Facebook still struggles to keep vaccine misinformation from reaching its users. In a June 2021 experiment by an advocacy group studying how anti-vaccine content spreads, two newly created test accounts on Facebook were recommended 109 pages containing anti-vaccine information in just two days

- A report by the London-based think tank Institute for Strategic Dialogue found that a TikTok feature that lets users add another person’s audio to their own videos is being used to promote misleading information about COVID-19 vaccines

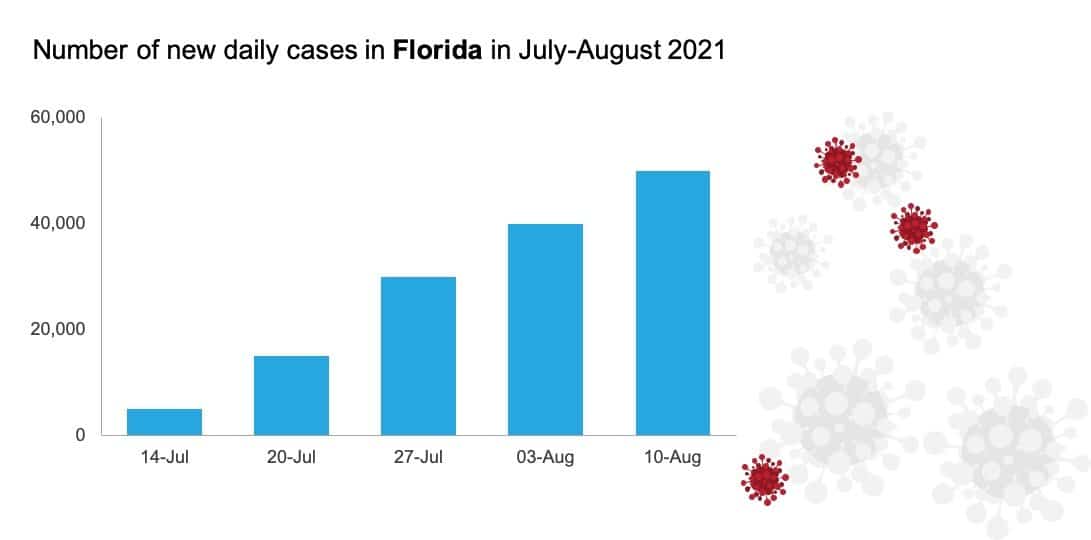

Unfortunately, the US has been witnessing a surge in COVID-19 cases. Much of this rise is concentrated in parts of the country with low vaccination rates, and vaccine misinformation has been reported as a contributing factor to those low rates.

As the graph below shows, Florida is grappling with a rise in COVID-19 cases amid vaccine hesitancy and misinformation.

US President Joe Biden has cited the connection between vaccine misinformation and low vaccination numbers in the US, blaming social media platforms for spreading falsehoods about the virus and the vaccine.

Centers for Disease Control and Prevention (CDC) Director Rochelle Walensky has also cautioned that COVID-19 is “becoming a pandemic of the unvaccinated.”

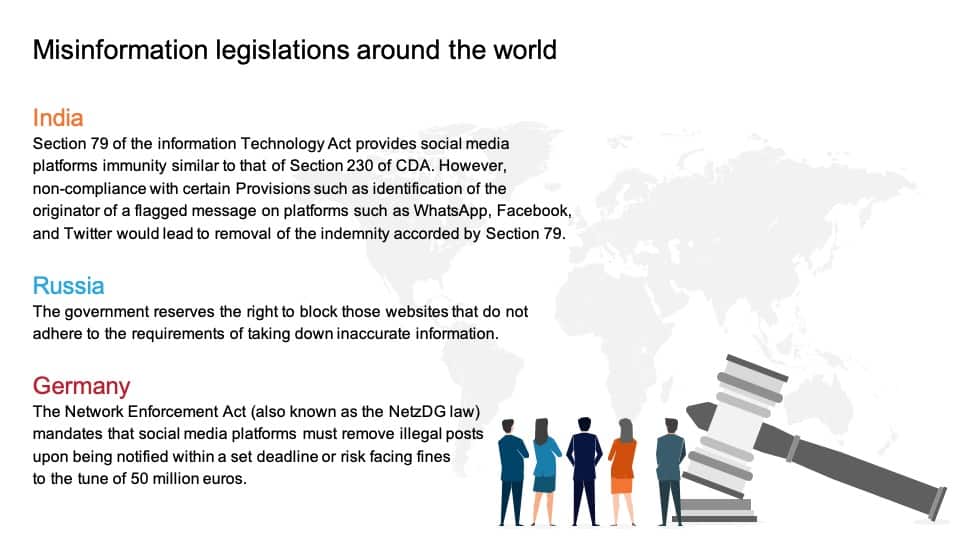

Misinformation legislation globally

The Health Misinformation Act would not be the first law in the world to hold social media platforms responsible for misinformation they host. The Trust and Safety (T&S) practices of enterprises, especially social media platforms, are increasingly subject to legal regulation.

Several other countries are looking at ways to hold enterprises accountable for the content they host. Here are some examples of legislation against online misinformation around the world:

What is the way forward for enterprises?

Enterprises need to adapt quickly to regulatory changes that compel them to take greater responsibility for the content they host. Steps they can take to adjust to the new realities include:

- Setting up a dedicated war room of moderators to tackle COVID-19-related misinformation

- Hiring moderators with specialized expertise who can spot health-related misinformation

- Working with medical professionals to train their automated Artificial Intelligence (AI)/Machine Learning (ML) moderation systems to identify and remove misinformation

- Collaborating with other enterprises to identify dubious content through cross-platform information sharing and collectively tackle their common enemy – misinformation

- Being agile enough to quickly adapt their policies to local content laws

- Tracking country-level laws when devising universal content policies, since the same type of user-generated content (UGC) may be legal in one region and illegal in another

What role can T&S service providers play in enabling enterprises to adjust to the new regulatory realities?

T&S service providers will play an increasingly important role as partners to platforms, helping them adapt to regulatory changes and emerge with stronger T&S functions.

Here are some ways service providers can evolve their offerings to support enterprises in these changing circumstances:

- Proactively offer content policy consulting to help enterprises make better UGC moderation decisions

- Help enterprises meet the specialized talent requirements of their T&S functions in light of new legislation

- Offer AI technology solutions that can be trained to help enterprises identify and remove health-related misinformation from their platforms

Global regulations place greater responsibility on enterprises to ensure the well-being of the people they connect and bring together through their platforms. Custom, agile T&S operations have become a pressing need for organizations. To meet these needs, service providers are evolving their offerings and becoming partners of choice in these trying times.

The bottom line

User-generated content is nuanced and needs context to be interpreted accurately, so online content moderation decisions are not always black and white. It is therefore critical that enterprises, service providers, health experts, regulators, and all concerned civil society groups come together to form policies that can remove health-related misinformation swiftly, before it reaches and influences more people.

A multi-stakeholder approach, such as an independent review board of experts from different walks of life, could be a promising way for all parties to take on the difficult challenge of defeating health misinformation.

*Section 230

Under current US law, social media platforms cannot be held liable for user-generated content on their platforms because of Section 230 of the CDA, which states:

“No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

**Under the proposed Health Misinformation Act, social media platforms would lose the immunity granted by Section 230 of the CDA only if they are found to have boosted health misinformation algorithmically, that is, shown a post to more users because of its engagement, as opposed to displaying it in chronological order.

To share your thoughts on responses to misinformation in social media, contact us at [email protected].