Leap Towards General AI with Generative Adversarial Networks

AI adoption is on the rise among enterprises. In fact, the research we conducted for our AI Services State of the Market Report 2021 found that as of 2019, 72% of enterprises had embarked on their AI journey. And they’re investing in various key AI domains, including computer vision, conversational intelligence, content intelligence, and various decision support systems.

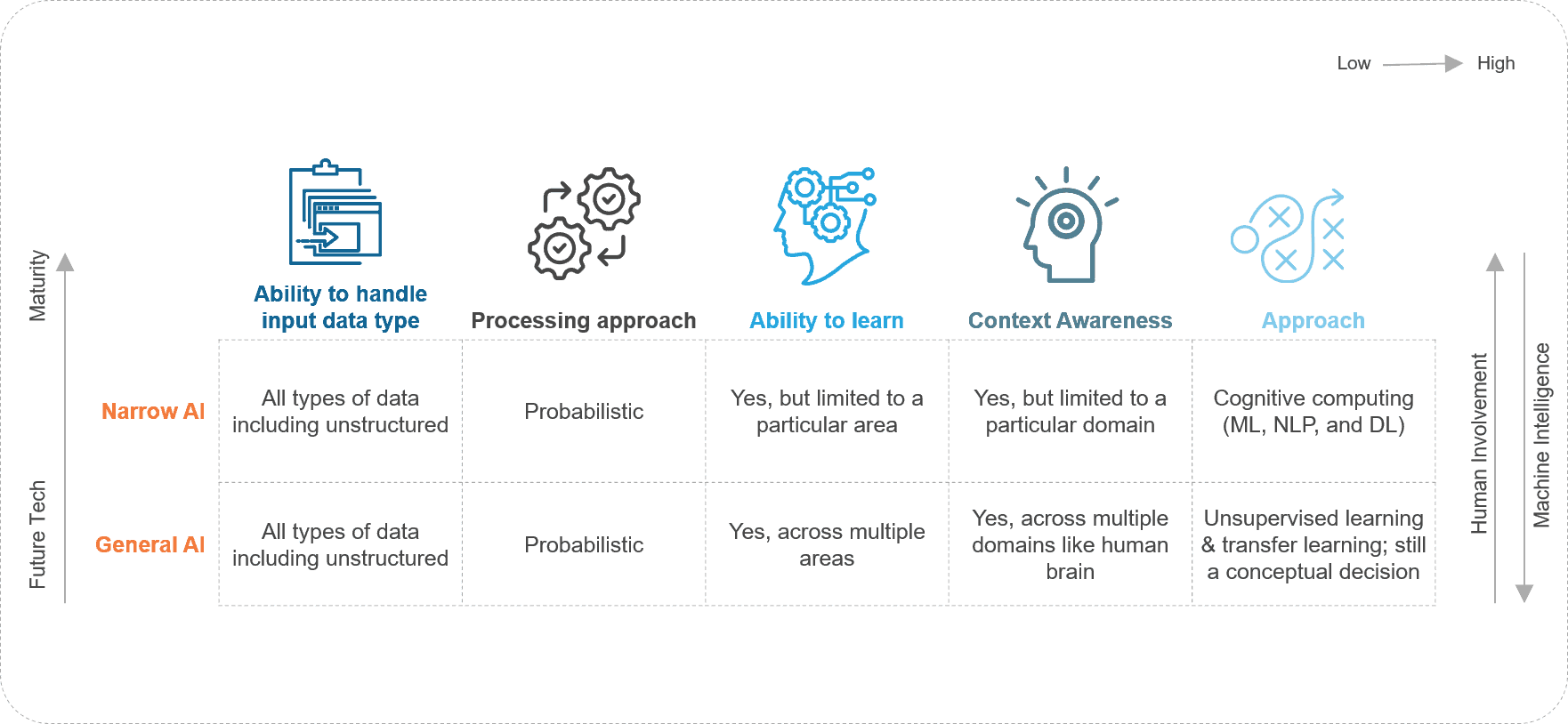

However, the machine intelligence that surrounds us today belongs to the Narrow AI domain. That means it’s equipped to tackle only a specified task. For example, Google Assistant is trained to respond to queries, while a facial recognition system is trained to recognize faces. Even seemingly complex applications of AI – like self-driving cars – fall under the Narrow AI domain.

Where Narrow AI falters

Narrow AI can process vast amounts of data and complete a given task efficiently; however, it can’t replicate human intelligence, including the human ability to reason, make judgments, and be aware of context.

This is where General AI steps in. General AI takes the quest to replicate human intelligence meaningfully ahead by equipping machines with the ability to understand their surroundings and context.

Exhibit 1: Evolution of AI

The pursuit of General AI

Researchers came up with Deep Neural Networks (DNNs), a popular AI structure that tries to mimic the human brain. DNNs rely on large labeled datasets to perform their function. For example, if you want a DNN to identify apples in an image, you need to provide it with enough apple images for it to learn the pattern that defines the general characteristics of an apple. It can then identify apples in any image. But can DNNs, or more appropriately, General AI, be imaginative?

Enter GANs

Generative Adversarial Networks (GANs) bring us closer to the concept of General AI by equipping machines to be “seemingly” creative and imaginative. Let’s look at how this concept works.

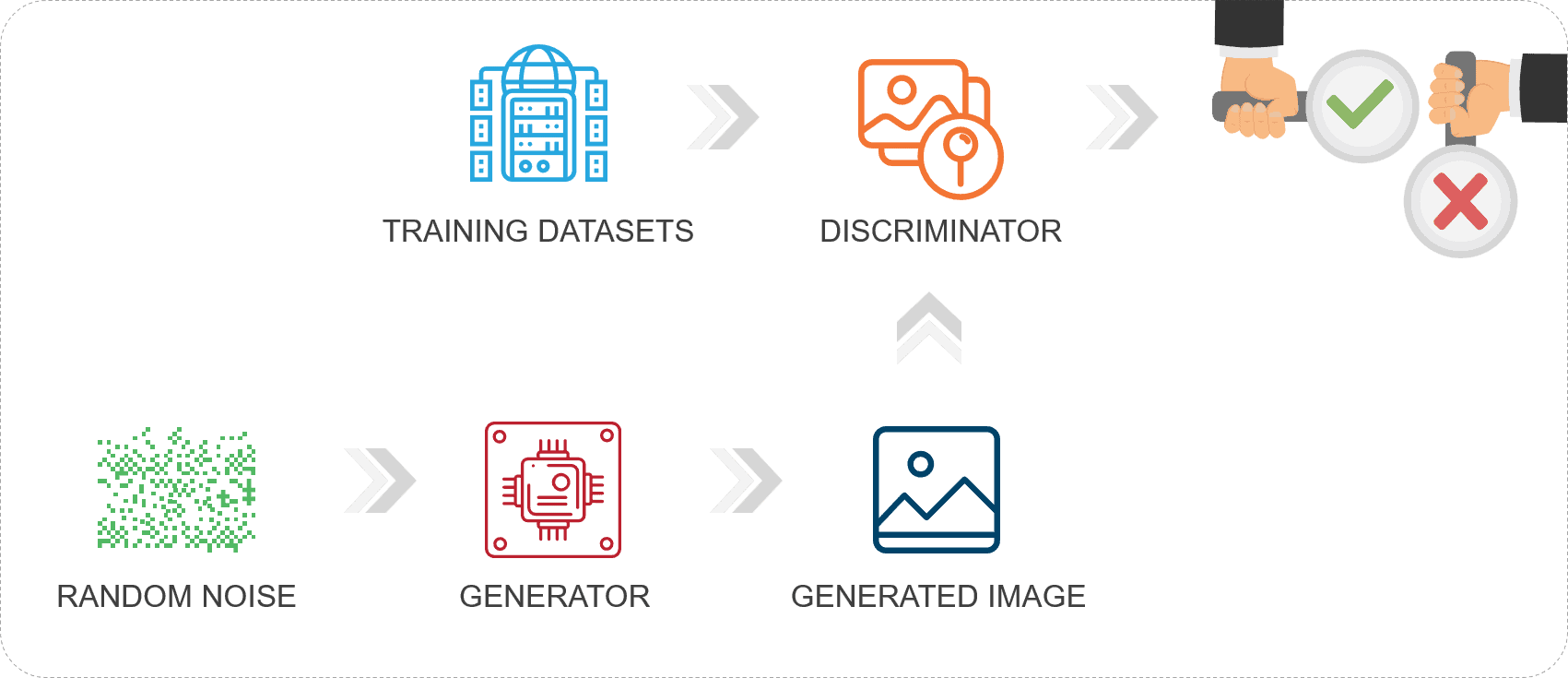

Exhibit 2: GAN working block diagram

GANs work with two neural networks to create and refine data. The first network, the generator, creates synthetic data such as an image, typically starting from random noise. The second network, the discriminator, is a classifier that is trained on both real and generated data and outputs a score between 0 and 1. A score of 0.4 means the discriminator estimates a probability of 0.4 that the generated image is real. If the score is close to zero, that feedback goes back to the generator, which creates a new image, and the cycle continues until a satisfactory result is obtained.
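To make the scoring concrete, here is a minimal sketch of how discriminator scores could be turned into training signals. It assumes binary cross-entropy, a common choice that this article does not itself specify; the function names `discriminator_loss` and `generator_loss` are illustrative, not taken from any library.

```python
import math

EPS = 1e-12  # guards against log(0); an implementation detail of this sketch


def discriminator_loss(real_scores, fake_scores):
    """Low when real images score near 1 and generated images score near 0."""
    real_term = sum(-math.log(s + EPS) for s in real_scores) / len(real_scores)
    fake_term = sum(-math.log(1.0 - s + EPS) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term


def generator_loss(fake_scores):
    """Low when the discriminator scores generated images near 1 (it is fooled)."""
    return sum(-math.log(s + EPS) for s in fake_scores) / len(fake_scores)


# A generated image scored 0.4 leaves the generator more to fix than one scored 0.9.
print(generator_loss([0.4]))
print(generator_loss([0.9]))
```

The two losses pull in opposite directions on the same scores, which is what makes the setup adversarial.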

The goal of the generator is to fool the discriminator into believing that the image being sent is indeed authentic, and the discriminator is the authority equipped to catch whether the image sent is fake or real. The discriminator acts as a teacher and guides the generator to create a more realistic generated image to pass as the real one.
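The back-and-forth between the two networks can be sketched with a deliberately tiny example. Everything here is an assumption made for illustration: the "images" are single numbers, the generator and discriminator each have only two parameters, and `train_toy_gan` is a hypothetical name. Real GANs use deep networks and a framework, and even this toy version may oscillate rather than settle.

```python
import math
import random


def sigmoid(x):
    # Numerically stable logistic function; maps any number into [0, 1].
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * z + b, with z as random noise
    w, c = 0.0, 0.0  # discriminator: score = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(real_mean, 1.0)  # one "real" sample
        z = rng.gauss(0.0, 1.0)
        fake = a * z + b                  # one generated sample

        # Discriminator step: push the score up on real, down on fake.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w -= lr * (-(1.0 - d_real) * real + d_fake * fake)
        c -= lr * (-(1.0 - d_real) + d_fake)

        # Generator step: adjust a and b so the updated discriminator
        # scores the same fake sample higher (gradient of -log(score)).
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - d_fake) * w
        a -= lr * grad_fake * z
        b -= lr * grad_fake
    return a, b, w, c


a, b, w, c = train_toy_gan()
print("generator parameters:", a, b)
print("discriminator parameters:", w, c)
```

The generator never sees the real data directly; its only guidance is the discriminator's score, which is the "teacher" role described above.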

Applications around GAN

The GAN concept is being touted as one of the most advanced AI/ML developments in the last 30 years. What can it help your business do, other than create an image of an apple?

- Creating synthetic data for scaled AI deployments: Obtaining quality data to train AI algorithms has been a key concern for AI deployments across enterprises. Even BigTech vendors such as Google, which is considered the home of data, struggle with it. Google launched “Project Nightingale” in partnership with Ascension, which raised concerns around misuse of medical data. Regulations that ensure data privacy safeguard people’s interests but create a major challenge for AI, because the data available to train AI models shrinks. This is where a GAN shines. It can create synthetic data, which helps in training AI models

- Translations: GANs are also finding applications in translation tasks. These include image-to-image translation, text-to-image translation, and semantic-image-to-photo translation

- Content generation: GANs are also being used in the gaming industry to create cartoon characters and creatures. In fact, Google launched a pilot to utilize a GAN to create images; this will help gaming developers be more creative and productive

Two sides to a coin

But, GANs do come with their own set of problems:

- A significant problem in productionizing a GAN is striking a balance between the generator and the discriminator. Too strong or too weak a discriminator could lead to undesirable results. If it is too weak, it will pass all generated images as authentic, which defeats the purpose of a GAN. And if it is too strong, no generated image will be able to fool it, leaving the generator with little useful feedback to improve

- The computing power required to train and run a GAN is significantly higher than that of a typical AI model, which limits its use by enterprises

- GANs, specifically the CycleGAN and pix2pix types, are known for their capabilities in face synthesis, face swapping, and editing facial attributes and expressions. These capabilities can be used to create doctored images and videos (deepfakes) that often pass as authentic, which makes GANs attractive to malicious actors. For example, footage of a politician expressing grief over pandemic victims could be doctored using a GAN to show a sinister smile on the politician’s face during the press briefing. Just imagine the amount of backlash and public uproar that would generate. And that is just a simple example of the destructive power of GANs
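The balance problem in the first point above can be illustrated with a little calculus. The sketch below assumes a sigmoid-output discriminator and compares two generator losses commonly described in the GAN literature, log(1 - D) and -log(D); the article does not say which loss a given system uses, and `generator_signal` is a hypothetical helper name.

```python
def generator_signal(score):
    """Size of the learning signal reaching the generator for one fake image.

    `score` is the discriminator's output D in [0, 1]. For a sigmoid output,
    the gradient magnitudes with respect to the discriminator's logit are:
      loss log(1 - D):  D
      loss -log(D):     1 - D
    """
    saturating = score
    non_saturating = 1.0 - score
    return saturating, non_saturating


for score in (0.001, 0.5, 0.999):
    print(score, generator_signal(score))
```

When a very strong discriminator scores every fake near 0, the log(1 - D) signal almost disappears; when a very weak one scores every fake near 1, the -log(D) signal almost disappears. Either way the generator stops learning anything useful.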

Despite these problems, enterprises should be keen to adopt GANs, as they have the potential to disrupt the business landscape and create immense competitive opportunities across various verticals. For example:

- A GAN can help the fashion and design industry create new and unique designs for high-end luxury items. It can also create imaginary fashion models, thus making it unnecessary to hire photographers and fashion models

- Self-driving cars need to cover millions of miles of road to gather data for testing their computer vision-based detection capabilities. The time spent gathering road data can be cut short by using synthetic data generated by a GAN. That, in turn, can enable faster time to market

If you’ve utilized GANs in your enterprise or know about more use cases where GANs can be advantageous, please write to us at [email protected] and [email protected]. We’d love to hear your experiences and ideas!